Mastering the LLM Ecosystem: A Strategic Roadmap for Business Dominance Using AI Services in 2026

In 2026, winning with AI means choosing what intelligence to own vs. rent. Explore the LLM ecosystem, AI agents, and the shift from proprietary models like OpenAI to open-source leaders like Meta—and learn how to scale smarter with AI-powered digital workforces.

In the boardroom, 2026 isn't about asking whether you should use AI. It’s about deciding which part of the "intelligence" you want to own and which part you’re okay with renting.

The initial wave of "chatting with documents" has matured into a complex, high-stakes LLM ecosystem. For a growth-focused owner, this ecosystem is the ultimate force multiplier.

It’s the difference between a business that hits a ceiling because you can't hire fast enough and one that scales infinitely because its operations are powered by a digital workforce, built on AI services, that never sleeps, never calls in sick, and gets smarter with every transaction.

What Exactly is the LLM Ecosystem?

The Large Language Model (LLM) ecosystem is essentially a high-performing engine bay. The model is the engine. However, for your car to win, you need the right fuel (data), a sophisticated GPS (retrieval systems), and a driver who knows the track (AI agents).

In simple terms, it is a network of tools and technologies that allow AI services to move from cool party trick to critical business infrastructure.

The Core Components You Need to Know:


Foundation Models: The "brains" (e.g., GPT-4, Gemini 3, Llama 4).

Vector Databases: The long-term memory where your business-specific knowledge lives.

RAG (Retrieval-Augmented Generation): The bridge connecting the brain to your private data, so the AI speaks your company’s truth, not hallucinated guesses.

AI Agents: Workers that don't just talk. They carry out tasks such as triggering your CRM, updating your inventory, or scheduling a sales call.

From "General Chat" to "Vertical Dominance"

We’ve moved past the startup grind where "good enough" AI services were okay. To stay ahead, your AI solutions must be precise. The most significant shift in the ecosystem is the move from general-purpose tools to Vertical-Specific AI.

Industry-Specific Breakthroughs:

Legal & Finance: Beyond summarizing, these machine learning services can now handle complex contract analysis and real-time fraud detection with higher accuracy than a junior associate.

AI Coding Assistants: Your dev team isn't just writing code. They're managing AI co-pilots that handle the syntax while they focus on architecture. This is how you build software 5x faster.

Information Discovery: Traditional search is dead. Generative AI services have replaced it with Discovery Engines that synthesize your company’s entire knowledge base into instant, actionable answers.

| Capability | Old Way (Manual/Legacy) | The MoogleLabs Way (AI-Powered) |
|---|---|---|
| Customer Support | 9-to-5, high turnover, slow response. | 24/7 sentiment-aware agents handling 80% of queries. |
| Creative Content | Weeks of drafting and revisions. | Instant, brand-aligned text, video, and audio assets. |
| Business Strategy | Monthly reports and "gut feeling." | Real-time predictive insights from artificial intelligence services. |
| Data Analysis | Manual spreadsheets, monthly reports. | Real-time predictive insights and "demand sensing." |
| Operations | Repetitive tasks eat 40% of staff time. | Autonomous workflows that handle the "boring stuff." |
| Growth | Scaling requires linear hiring. | Scaling requires only more compute power. |

Choosing Your Weapon: Open-Source vs. Proprietary Models

A major choice for any business leader in 2026 is the "Buy vs. Build" dilemma. Through our AI services work, we often see two distinct paths:

1. Proprietary Models (The "Rented" Intelligence)

Models like OpenAI’s GPT-5 or Google’s Gemini 3 are incredibly powerful and ready to go out of the box.

Pros:

Immediate deployment

Top-tier reasoning

Cons:

Usage-based costs can scale quickly

You are bound by their privacy rules.

2. Open-Source Models (The "Owned" Intelligence)

With the rise of Llama 4 and Mistral Large, businesses are now choosing to host their own models.

Pros:

Total data privacy (data never leaves your servers)

No "per-message" fees

Custom fine-tuning

Cons:

Requires expert AI/ML development to set up and maintain.
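To make the rent-versus-own trade-off concrete, here is a rough break-even sketch. It assumes a simple pricing model (a per-1,000-token API price on one side, a flat monthly hosting bill on the other). The function names and every number in the usage note are illustrative placeholders, not real vendor prices.

```python
def monthly_api_cost(requests_per_month: int,
                     tokens_per_request: int,
                     price_per_1k_tokens: float) -> float:
    """Usage-based bill for a rented model: it grows linearly with volume."""
    return requests_per_month * tokens_per_request / 1000 * price_per_1k_tokens


def break_even_requests(fixed_hosting_cost: float,
                        tokens_per_request: int,
                        price_per_1k_tokens: float) -> float:
    """Monthly request volume at which a flat hosting bill equals the API bill.

    Assumption: hosting is treated as a fixed monthly cost and ignores the
    engineering time needed to set up and maintain the model.
    """
    cost_per_request = tokens_per_request / 1000 * price_per_1k_tokens
    return fixed_hosting_cost / cost_per_request
```

With placeholder inputs of 1,000 tokens per request, a price of 0.01 per 1,000 tokens, and a 5,000 monthly hosting bill, `break_even_requests(5000, 1000, 0.01)` lands at roughly 500,000 requests a month. Below that volume, renting is cheaper under these assumptions; above it, owning starts to pay off.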

Expert Tip: Many "Growth-Focused Owners" are now adopting a Hybrid Stack. Use a big, proprietary model for complex strategy and a smaller, custom-tuned open-source model for high-volume, repetitive tasks.
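A Hybrid Stack usually comes down to a routing rule. The sketch below is one possible rule, not a standard: the `Task` fields, the model labels, and the volume threshold are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Task:
    description: str
    needs_deep_reasoning: bool  # e.g. strategy work vs. templated replies
    monthly_volume: int         # how often this task runs per month


def choose_model(task: Task, volume_threshold: int = 10_000) -> str:
    """Route a task to a model tier.

    Assumed policy: complex work goes to the large proprietary model,
    high-volume repetitive work goes to the small self-hosted model, and
    low-volume simple work stays on the proprietary model because it does
    not justify dedicated hosting.
    """
    if task.needs_deep_reasoning:
        return "proprietary-large"
    if task.monthly_volume >= volume_threshold:
        return "open-source-small"
    return "proprietary-large"
```

For example, `choose_model(Task("tag support tickets", False, 50_000))` returns `"open-source-small"`, while a quarterly pricing review with `needs_deep_reasoning=True` is routed to `"proprietary-large"`.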

The Rise of the Tool-Using AI Agent

The most exciting development in the current AI services ecosystem is the transition from passive chatbots to Autonomous AI Agents. These aren't just assistants; they are "workers" capable of using your existing software stack.

What makes an Agent different?

Planning: They turn complex goals into smaller and achievable steps.

Tool Integration: The agents can log into your CRM, trigger a Zapier workflow, or update a project in Jira.

Feedback Loops: They learn from errors and adjust their approach without you having to re-prompt them.

By integrating AI development services that focus on agents, you aren't just adding a feature; you're adding a department.
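The three traits above can be sketched as a toy loop. Everything here is a stand-in: the plan is hard-coded where a real agent would have a model draft it, the CRM is an in-memory dictionary, and the tool names are hypothetical rather than any vendor's API.

```python
from typing import Any, Callable

# In-memory stand-in for a real CRM; a production agent would call the vendor's API.
CRM: dict[str, str] = {}


def update_crm_live(email: str, status: str) -> str:
    """Hypothetical tool that simulates an outage so the loop has an error to react to."""
    raise ConnectionError("CRM API unavailable")


def update_crm_queued(email: str, status: str) -> str:
    """Hypothetical fallback tool: stores the update locally for a later sync."""
    CRM[email] = status
    return f"queued {status} for {email}"


def notify_sales(email: str, status: str) -> str:
    return f"sales notified about {email}"


TOOLS: dict[str, Callable[..., str]] = {
    "update_crm_live": update_crm_live,
    "update_crm_queued": update_crm_queued,
    "notify_sales": notify_sales,
}


def plan_lead_followup(email: str) -> list[dict[str, Any]]:
    """Planning: split the goal into ordered steps, each with a preferred and a fallback tool."""
    args = {"email": email, "status": "qualified"}
    return [
        {"step": "record lead status", "tools": ["update_crm_live", "update_crm_queued"], "args": args},
        {"step": "alert the team", "tools": ["notify_sales"], "args": args},
    ]


def run_agent(email: str) -> list[tuple[str, str, str]]:
    """Run each step; on failure, move to the next tool instead of waiting for a re-prompt."""
    log: list[tuple[str, str, str]] = []
    for step in plan_lead_followup(email):
        for tool_name in step["tools"]:
            try:
                TOOLS[tool_name](**step["args"])  # tool integration
                log.append((step["step"], tool_name, "ok"))
                break
            except Exception as exc:  # feedback loop: note the error, adjust the approach
                log.append((step["step"], tool_name, f"failed: {exc}"))
        else:
            raise RuntimeError(f"no tool could complete: {step['step']}")
    return log
```

Calling `run_agent("ana@example.com")` logs one failed attempt against the simulated live CRM, then completes the same step through the queued fallback and moves on to the notification.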

Emerging Categories: Multimodal and Embodied AI

The AI services ecosystem is no longer restricted to text. In 2026, we are seeing the emergence of:

Multimodal Intelligence: AI that understands and generates video, audio, and images simultaneously. Imagine an AI that watches your security footage and drafts a safety report in real-time.

Scientific Discovery: AI that predicts protein structures and accelerates drug development, taking years off the R&D cycle.

Synthetic Data Enhancement: Using AI to create high-quality training data where real-world data is scarce or sensitive.

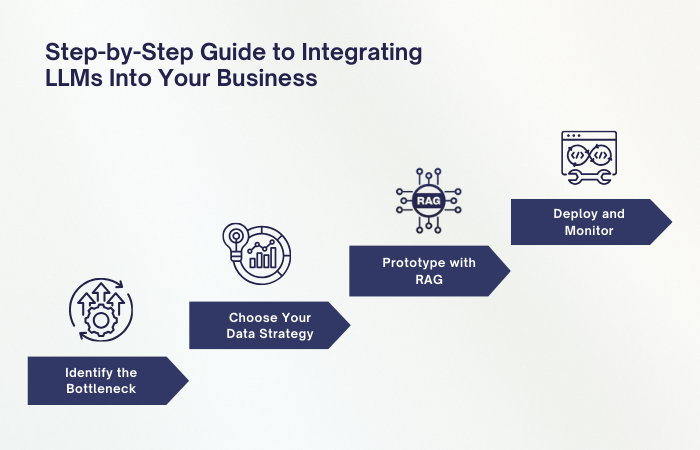

How to Integrate LLMs Into Your Business: A Step-by-Step Guide

If you're looking for artificial intelligence services that actually move the needle, don't start with the tech. Start with the "leak."

Step 1: Identify the Bottleneck

Where is your team stuck? Is it answering the same 50 customer questions? Is it manually drafting quotes? Break these "jobs" into discrete "tasks."

Step 2: Choose Your Data Strategy

AI services are only as good as the data they’re fed. Our internal guide on LLMs vs. Generative AI explains how to ensure your model doesn't just "generate" but actually "knows" your business.

Step 3: Prototype with RAG

Instead of expensive retraining, use Retrieval-Augmented Generation. It’s like giving the AI an open-book exam with your company handbook.
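Here is what that open-book exam can look like in a first prototype. This is a deliberately simplified sketch: it ranks handbook snippets by word overlap (cosine similarity over word counts) instead of using a real embedding model or vector database, and it stops at building the prompt rather than calling any model. The handbook entries and function names are made up for illustration.

```python
import math
import re
from collections import Counter

# Tiny stopword list so common words do not dominate the match (an assumption for this toy).
STOPWORDS = {"a", "an", "the", "is", "are", "to", "of", "with", "what", "how", "do", "i"}

HANDBOOK = [
    "A refund is issued within 14 days of purchase with a valid receipt.",
    "Support hours are Monday to Friday, 9am to 6pm.",
    "Enterprise plans include a dedicated account manager.",
]


def embed(text: str) -> Counter:
    """Stand-in for an embedding model: a bag of lowercase words."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return Counter(w for w in words if w not in STOPWORDS)


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, documents: list[str], k: int = 1) -> list[str]:
    """Return the k snippets most similar to the question."""
    q = embed(question)
    return sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]


def build_prompt(question: str, documents: list[str], k: int = 1) -> str:
    """Assemble the 'open book': retrieved context first, then the question."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question, documents, k))
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {question}"
```

Passing `build_prompt("What is the refund window after a purchase?", HANDBOOK)` to the model of your choice gives it the refund snippet to answer from, which is the whole point of the approach: the model's weights stay untouched and the knowledge lives in your documents.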

Step 4: Deploy and Monitor

This is where most consultants with binders fail. Real generative AI development requires constant monitoring to prevent drift and ensure the AI stays aligned with your brand voice.
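Monitoring can start small. The sketch below assumes you already collect some per-response quality score between 0 and 1 (human ratings, for instance). The class name, thresholds, and window size are illustrative choices, and a rolling average is only one of several ways to watch for drift.

```python
from collections import deque


class DriftMonitor:
    """Flags when the rolling average of a quality score falls below an allowed floor."""

    def __init__(self, baseline: float, tolerance: float = 0.05, window: int = 50):
        self.baseline = baseline      # score level measured at launch
        self.tolerance = tolerance    # how far below baseline is still acceptable
        self.scores: deque[float] = deque(maxlen=window)

    def record(self, score: float) -> bool:
        """Add a score; return True once the window is full and its mean is below the floor."""
        self.scores.append(score)
        window_full = len(self.scores) == self.scores.maxlen
        rolling_mean = sum(self.scores) / len(self.scores)
        return window_full and rolling_mean < self.baseline - self.tolerance
```

A `True` result is a cue to review recent outputs, prompts, or source documents. It does not say what changed, only that something did.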

The "Unfair" Advantage: AI Agents as Your Smartest Hire

We’re moving toward a Role-Based AI era. In 2026, we aren't just building chatbots; we're building digital employees. These agents can handle end-to-end processes, like a Lead Response Agent that doesn't just answer a question but qualifies the lead, checks your calendar, and books the meeting.

For more on where this is heading, check out our deep dive into AI/ML Breakthroughs for 2026.

Is your business running on a "horse and buggy" manual process while your competitors are installing turbo engines? The LLM ecosystem is your chance to outmaneuver the market. Whether you need a custom-built agent or a full-scale AI services strategy, we're the partner that brings the breakthrough.
