Enterprise AI Risk Management Framework: A Practical Guide

AI is no longer experimental—it’s operational and risky. This guide breaks down how enterprises can build a robust AI Risk Management Framework to tackle bias, drift, and compliance challenges while aligning with the EU AI Act and accelerating secure, scalable innovation.

By 2026, the experimentation phase for AI is over. But as systems gain autonomy, they also gain new ways to fail. While 75% of leaders are worried about AI risks, few have turned that concern into a technical defense.

A functional AI Risk Management Framework isn't just for the risk-averse; it’s the infrastructure that keeps your innovation from becoming a headline. With EU AI Act enforcement hitting on August 2, 2026, moving from watching to acting is no longer optional.

Standard IT security isn't enough. You need a roadmap for the unique ways neural networks break, from logic drift to data poisoning. This guide shows you how to build a framework that protects your brand while actually accelerating ROI.

What is AI Risk Management?

AI risk management is the systematic process of identifying, assessing, and mitigating the unique threats posed by artificial intelligence throughout its entire lifecycle. Traditional software follows fixed logic, whereas AI systems are probabilistic. They learn, adapt, and, if left unguided, can drift away from their intended purpose.

Adopting an AI Risk Management Framework means following a more proactive governance model that combines technical safeguards with organizational policies. Examples include machine learning services that monitor for bias and policies that define who is accountable when an autonomous agent makes a high-stakes decision.

The Real-World Stakes in 2026

According to a recent survey from Gallagher, 82% of enterprises report an early positive impact from AI adoption. However, only half of them are making any significant investment in governance frameworks to address AI risks.

This gap is where projects stall.

Without a clear strategy, shadow AI (unmanaged tools used by employees) can lead to data leaks, while high-risk models can trigger massive regulatory fines under the EU AI Act.

Examples of AI Risk Management Failures That Led to Shutdown of AI Tools

Tay Chatbot (2016): Microsoft built the Tay chatbot for Twitter (now X) to learn from user interactions. It lacked safety filters and prompt guardrails, and users taught the bot to produce racist, sexist, and offensive content. It was shut down within 16 hours of release.

Grok has more recently faced a similar problem, with users prompting it to create explicit images. xAI has put AI safety measures in place to address the issue, but further improvements are needed for compliance. xAI has also announced that it will sign the safety and security chapter of the EU’s General-Purpose AI Code of Practice.

Amazon’s AI Hiring Tool (2018): Amazon built this tool to screen job applicants but abandoned it after discovering that it penalized resumes containing the word “women’s.”

AI Risk Management: The Four Pillars of Failure

To manage AI effectively, decision-makers must look beyond standard cybersecurity. A comprehensive AI Risk Management Framework addresses these four categories of risk:

Data Risks

This includes everything from PII (Personally Identifiable Information) leaks to data poisoning, where malicious inputs corrupt a model's training set. At MoogleLabs, we address these through specialized blockchain development services to ensure data integrity and provenance.

Model Risks

These are internal flaws, such as hallucinations or model drift. Other key sources of model risk include data issues, development errors caused by incorrect assumptions, flawed mathematical logic or inappropriate methodology, and implementation failures.

Operational Risks

These occur when AI systems lack a human-in-the-loop or fail to integrate with existing infrastructure. Using a professional DevOps service to automate testing is often the best defense here.

Ethical & Legal Risks

This covers algorithmic bias that leads to discriminatory outcomes, a major focus for the healthcare AI solutions we develop to ensure patient equity.

By defining these risks early, you convert unpredictable AI into a governed corporate asset. This transition is essential for any enterprise looking to deploy agentic AI solutions that can act on behalf of the company without constant manual supervision.

AI Risk Management Framework: Key Components and Strategies

An AI Risk Management Framework must be built on technical and operational components that address the full model lifecycle. In 2026, bolting on safety after a model is built is a recipe for failure. A professional AI Risk Management Framework requires a deep integration of artificial intelligence services with governance protocols.

A Successful Strategy for AI Risk Management Includes:

Risk Identification & Inventory: Creating a Single Source of Truth for every model in your network. This includes third-party APIs and internal experiments.

Risk Categorization: Not every model needs the same level of scrutiny. Rank each system as High, Medium, or Low based on how much it impacts human safety, data privacy, or financial stability.

Automated Risk Mitigation Controls: You need real-time guardrails. These act as digital filters, scanning inputs for prompt injections and outputs for bias or data leaks before they ever reach a user.

Continuous AI Monitoring: Using observability tools to detect model drift, the slow decay of accuracy as real-world data shifts away from the original training set.

Incident Response for AI Systems: A dedicated workflow for 72-hour regulatory reporting and manual Kill Switches to isolate malfunctioning agents instantly.
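
The inventory and categorization steps above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed schema: the `ModelRecord` fields, the `categorize` rule, and the example models are all assumptions made for the sketch, and a real tiering policy would come from your own legal and risk teams.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass(frozen=True)
class ModelRecord:
    """One inventory entry. Every field name here is illustrative."""
    name: str
    owner: str    # the accountable human
    source: str   # e.g. "internal", "third_party_api", "experiment"
    affects_human_safety: bool
    handles_personal_data: bool
    affects_financial_decisions: bool


def categorize(record: ModelRecord) -> RiskTier:
    """Hypothetical rule: any safety impact is HIGH; otherwise count the other impacts."""
    if record.affects_human_safety:
        return RiskTier.HIGH
    impacts = int(record.handles_personal_data) + int(record.affects_financial_decisions)
    if impacts == 2:
        return RiskTier.HIGH
    if impacts == 1:
        return RiskTier.MEDIUM
    return RiskTier.LOW


# The inventory covers internal models, third-party APIs, and experiments alike.
inventory = [
    ModelRecord("resume-screener", "hr-lead", "internal", False, True, True),
    ModelRecord("support-chatbot", "cx-lead", "third_party_api", False, True, False),
    ModelRecord("doc-summarizer", "it-lead", "experiment", False, False, False),
]

tiers = {record.name: categorize(record) for record in inventory}
for name, tier in tiers.items():
    print(f"{name}: {tier.value}")
```

Keeping the rule in one small function makes the tiering decision easy to review and audit, which matters more than the specific thresholds chosen here.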

By embedding these layers directly into your DevOps service pipeline, you ensure every update to your AI is automatically vetted against your AI Risk Management Framework standards. It’s the only way to scale without crossing a liability line.
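
One way to picture this automatic vetting is a release gate that a pipeline runs before every model update. The sketch below is an assumption-heavy illustration: the metric names, the `GateThresholds` defaults, and the `release_gate` function are hypothetical and not tied to any particular CI product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GateThresholds:
    """Illustrative limits; real values would come from your own risk policy."""
    min_accuracy: float = 0.90
    min_disparate_impact: float = 0.80
    max_drift_score: float = 0.20


def release_gate(metrics: dict, limits: GateThresholds = GateThresholds()) -> list:
    """Return a list of violations; an empty list means the update may ship."""
    violations = []
    if metrics["accuracy"] < limits.min_accuracy:
        violations.append("accuracy below baseline")
    if metrics["disparate_impact"] < limits.min_disparate_impact:
        violations.append("bias parity below threshold")
    if metrics["drift_score"] > limits.max_drift_score:
        violations.append("drift above threshold")
    return violations


# Hypothetical evaluation results for a candidate model update.
candidate = {"accuracy": 0.93, "disparate_impact": 0.72, "drift_score": 0.05}
problems = release_gate(candidate)
if problems:
    print("Release blocked:", "; ".join(problems))
```

In a real pipeline the script would exit with a non-zero status when `problems` is non-empty, so the deployment step never runs for a model that fails the gate.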

The Global Compliance Landscape: NIST, EU AI Act, and Beyond

Navigating the legalities of 2026 requires aligning your AI Risk Management Framework with several international standards. This is no longer optional; for many, it is the price of market entry.

The NIST AI RMF 1.0: The Gold Standard

The National Institute of Standards and Technology (NIST) provides the most influential AI Risk Management Framework. It is organized into four primary functions:

Govern: Cultivating a culture of risk awareness and assigning clear accountability.

Map: Understanding the context and dependencies of each AI deployment.

Measure: Using quantitative metrics to track bias, accuracy, and reliability.

Manage: Deploying the actual technical controls to mitigate identified threats.

The EU AI Act & August 2, 2026

The EU AI Act is the world’s first comprehensive AI law. By August 2, 2026, enterprises deploying High-Risk AI in the EU, such as tools for recruitment or credit scoring, must prove their AI Risk Management Framework meets strict transparency and safety benchmarks. Fines reach up to €35 million or 7% of global annual turnover for prohibited AI practices, and up to €15 million or 3% for breaches of most other obligations, including those covering high-risk systems.

ISO/IEC Standards & U.S. Executive Orders

Further layers of the AI Risk Management Framework include ISO/IEC 42001 (the AI Management System standard) and recent U.S. Executive Orders that mandate safety testing for powerful foundation models.

At MoogleLabs, we specialize in generative AI services in regulatory compliance to help enterprises align with these shifting global mandates.

AI in Risk Management vs. AI Risk Management: Understanding the Difference

Although both using AI as a tool and managing AI as a risk require high-level machine learning services, their objectives, technical controls, and oversight protocols are fundamentally different.

The table below breaks down how a modern AI Risk Management Framework addresses these two distinct disciplines:

| Feature | AI in Risk Management | AI Risk Management |
|---|---|---|
| Role of AI | The Solution: AI is the tool used to identify traditional business threats. | The Subject: AI is the asset that needs to be secured and governed. |
| Primary Objective | To automate and improve the accuracy of risk detection (e.g., fraud or credit defaults). | To ensure the AI system itself is safe, unbiased, and compliant with the EU AI Act. |
| Key Technical Focus | Pattern recognition, anomaly detection, and predictive analytics. | AI safety, explainability (XAI), and preventing model drift. |
| Common Example | Using deep learning fraud detection to scan millions of financial transactions. | Implementing guardrails to prevent a financial bot from giving unauthorized advice. |
| Source of Risk | Market volatility, external fraud, or operational failures. | Model hallucinations, data poisoning, or algorithmic bias. |
| Implementation Layer | Integrated into business logic and financial reporting workflows. | Embedded into the DevOps service and MLOps pipeline for continuous oversight. |
| Outcome Goal | Reduced financial loss and improved operational efficiency. | Trustworthy AI that meets NIST AI RMF and OECD AI Principles. |

For any enterprise scaling AI agent development, navigating both sides of this table is mandatory. You need the predictive power of AI to stay competitive, but you also need a robust AI Risk Management Framework to ensure those autonomous tools don't create new liabilities.

The most resilient companies are those that treat these two functions as a unified "Trust, Risk, and Security" (AI TRiSM) strategy.

Strategic Risk Mitigation: The 2026 Blueprint

An effective AI Risk Management Framework identifies vulnerabilities before they impact the bottom line. By categorizing these threats, enterprises can deploy specific artificial intelligence services to neutralize them.

Core Risks to Mitigate

Algorithmic Bias: Skewed training data leading to discriminatory outcomes. Addressing it is critical for compliance with the EU AI Act.

Model Hallucinations: Factually incorrect outputs that create legal and brand liability.

Data Poisoning: Malicious corruption of training sets to create backdoors.

Model Drift: The decay of model accuracy as real-world data shifts away from the training data.

Privacy Leaks: Unintended exposure of PII through model responses.

Mitigation Techniques

RAG (Retrieval-Augmented Generation): Grounds the AI in a verified, private knowledge base. This is a staple in our agentic AI solutions.

Synthetic Data: Allows for training robust models without using sensitive, real-world customer data.

Governance-by-Design: Integrating risk assessments into the initial DevOps service pipeline.

Technical Controls & Business Benefits

Implementing an AI Risk Management Framework transforms unpredictable AI into a governed corporate asset.

5 Essential Technical Controls

Explainability (XAI): Opening the Black Box to show the rationale behind model decisions, which is vital for healthcare AI solutions.

Drift Monitoring: Automated flight controllers that alert teams when model logic begins to decay.

Red Teaming: Proactive adversarial testing to find vulnerabilities like prompt injection.

Generative Guardrails: Real-time filters that redact PII and block unauthorized topics, essential for a B2A strategy.

Human-in-the-Loop (HITL): A manual Kill Switch for high-stakes financial or medical authorizations.
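
To make the generative guardrail control concrete, here is a minimal sketch of an output filter that blocks unauthorized topics and redacts two kinds of PII. The regular expressions, the `BLOCKED_TOPICS` list, and the `apply_guardrails` function are simplified assumptions for illustration; production guardrails typically rely on far more thorough detection than two patterns and a keyword list.

```python
import re

# Deliberately simple patterns: they catch common email and phone formats only.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")

# Hypothetical topics this assistant is not authorized to discuss.
BLOCKED_TOPICS = ("investment advice", "medical diagnosis")


def apply_guardrails(text: str) -> tuple:
    """Return (allowed, safe_text). Blocked text is replaced entirely."""
    lowered = text.lower()
    for topic in BLOCKED_TOPICS:
        if topic in lowered:
            return False, "[blocked: unauthorized topic]"
    redacted = EMAIL.sub("[EMAIL]", text)
    redacted = PHONE.sub("[PHONE]", redacted)
    return True, redacted


allowed, safe = apply_guardrails("Contact jane.doe@example.com or +1 (555) 123-4567.")
print(allowed, safe)
```

The same function can run on user inputs before they reach the model and on model outputs before they reach the user, which is the two-sided filtering the control describes.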

The Enterprise ROI of Risk Management

| Benefit | Impact |
|---|---|
| Regulatory Resilience | Avoids fines (up to 7% of turnover) under the EU AI Act. |
| Stakeholder Trust | Increases adoption rates for new AI agent development projects. |
| Cost Efficiency | Early detection of bias or drift is 10x cheaper than post-deployment fixes. |
| Global Scaling | Provides a standardized safety blueprint for entering new markets. |

Strategic Best Practices for 2026

Moving from AI pilots to enterprise infrastructure needs a shift in governance. These eight practices ensure your artificial intelligence services remain assets in 2026:

AI as Infrastructure: Apply the same uptime and security rigor to AI as you do to core databases.

Independent Validation: Use third-party audits to eliminate internal blind spots in machine learning services.

Audit-Ready Documentation: Track data lineage and training parameters to meet EU AI Act and ISO/IEC 42001 standards.

Automated Oversight: Use a dedicated DevOps service for real-time monitoring of model accuracy and fairness.

Regulatory Alignment: Sync your internal policies with NIST AI RMF and OECD AI Principles.

Clear Accountability: Assign a human owner to every model to oversee legal and ethical alignment.

Risk Simulations: Run War Games to test how AI agent development projects handle adversarial inputs.

Executive AI Literacy: Ensure leadership understands the nuances of bias, safety, and hallucinations.

Measuring Performance: The Compliance Dashboard

A data-driven AI Risk Management Framework replaces gut feelings with verifiable KPIs during audits:

| Metric | Business Focus |
|---|---|
| Model Accuracy | Performance against verified baselines. |
| Error Rates | Balancing False Positives/Negatives in deep learning fraud detection. |
| Bias Parity | Quantifying disparate impact across demographic groups. |
| Drift Indicators | Measuring the distance between current behavior and original training data. |
| Incident Frequency | Tracking guardrail triggers and manual kill switch activations. |
| Audit Readiness | Percentage of internal controls meeting NIST AI RMF standards. |
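
Two of these metrics, Bias Parity and Drift Indicators, are easy to show with small formulas. The sketch below uses the disparate impact ratio and the Population Stability Index (PSI) as stand-ins. Both are common choices, but they are assumptions here rather than metrics this guide mandates, and the sample numbers are invented for illustration.

```python
import math


def disparate_impact(selected_a: int, total_a: int, selected_b: int, total_b: int) -> float:
    """Selection rate of group A divided by the selection rate of reference group B."""
    return (selected_a / total_a) / (selected_b / total_b)


def psi(expected: list, actual: list, eps: float = 1e-6) -> float:
    """Population Stability Index over pre-binned proportions (each list sums to 1)."""
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) for empty bins
        total += (a - e) * math.log(a / e)
    return total


# Invented example: 30 of 100 applicants selected in group A, 50 of 100 in group B.
ratio = disparate_impact(30, 100, 50, 100)

# Invented example: share of traffic per score bin at training time vs. today.
training_bins = [0.25, 0.25, 0.25, 0.25]
current_bins = [0.10, 0.20, 0.30, 0.40]
drift = psi(training_bins, current_bins)

print(f"disparate impact ratio: {ratio:.2f}")
print(f"PSI: {drift:.3f}")
```

A widely used rule of thumb treats a disparate impact ratio below 0.8 as a flag for review, and many teams treat a PSI above roughly 0.25 as significant drift. Both cutoffs are conventions to be confirmed against your own policy, not legal thresholds.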

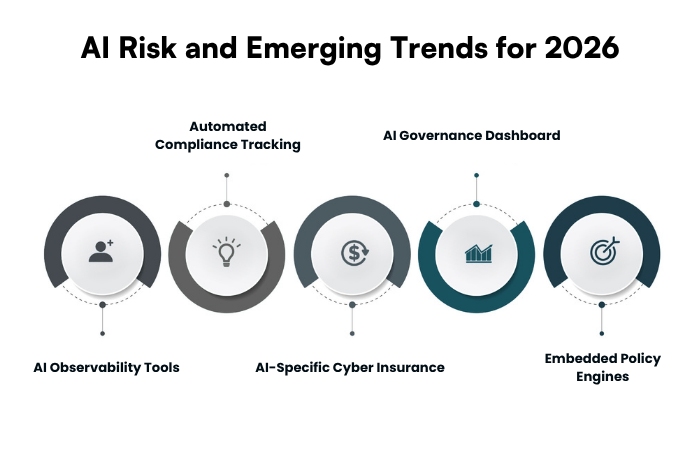

AI Risk and Emerging Trends for 2026

In 2026, the AI Risk Management Framework is becoming more automated, integrated, and invisible. The following trends are defining the next phase of enterprise AI:

AI Observability Tools: Tools that explain why a model is drifting before the drift impacts the user.

Automated Compliance Tracking: Software that maps your model behavior to the EU AI Act in real-time, generating compliance reports on demand.

AI-Specific Cyber Insurance: The rise of insurance products that specifically cover algorithmic failure or hallucination-led liability.

AI Governance Dashboards: A centralized Control Tower for the C-suite to view the safety status of all agentic AI solutions across the organization.

Embedded Policy Engines: Hard-coded ethical rules that prevent an agent from taking unauthorized actions, regardless of the user's prompt.
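
An embedded policy engine can be pictured as a check that sits between the agent and the systems it acts on. The following sketch is hypothetical throughout: the `Action` type, the allow-list, and the refund limit are invented to show the shape of the idea, not taken from any specific product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    """A structured action the agent proposes. Field names are illustrative."""
    name: str
    amount_eur: float = 0.0


# Hard-coded rules live outside the model, so no prompt can rewrite them.
ALLOWED_ACTIONS = {"send_email", "issue_refund"}
MAX_REFUND_EUR = 500.0


def authorize(action: Action) -> tuple:
    """Return (allowed, reason) for a proposed action."""
    if action.name not in ALLOWED_ACTIONS:
        return False, f"action '{action.name}' is not on the allow-list"
    if action.name == "issue_refund" and action.amount_eur > MAX_REFUND_EUR:
        return False, "refund exceeds limit; escalate to a human"
    return True, "ok"


for proposed in (
    Action("issue_refund", 120.0),
    Action("issue_refund", 5000.0),
    Action("delete_customer_record"),
):
    print(proposed.name, authorize(proposed))
```

The key design choice is that `authorize` inspects the structured action rather than the user's prompt, so a cleverly worded request cannot change the outcome of the check.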

The future of risk management is agentic AI workflows that can self-heal and self-correct. By staying ahead of these trends, you ensure your enterprise is ready for the emerging trends in AI technology that will dominate the late 2020s.

AI Risk Management – The Gateway to Innovation

In 2026, an AI Risk Management Framework is the infrastructure that allows your enterprise to move faster with certainty. By addressing vulnerabilities, from model drift to algorithmic bias, early in the lifecycle, you transform AI from an unpredictable experiment into a governed corporate asset.

The transition toward agentic AI enterprise use cases in 2026 requires a shift in how we view oversight. We must build agentic systems to be self-healing and inherently compliant. Whether you are aligning with the NIST AI RMF or preparing for the EU AI Act deadline, a structured approach ensures your innovation remains secure, ethical, and scalable.

At MoogleLabs, we specialize in everything ranging from agentic AI solutions to secure machine learning services. Our team ensures that your technology is a safe driver of ROI.
