AI Safety in Industry Applications: Healthcare, FinTech, and Autonomous Systems

As global AI regulations take effect in 2026, industries like healthcare, FinTech, and autonomous systems must prioritize AI safety. Learn how Safe-by-Design practices ensure compliance, transparency, and reliability in critical AI applications.

By 2026, the "move fast and break things" era of AI has ended. For highly regulated sectors, AI safety in industry is now the primary driver of digital trust and regulatory compliance.

As the August 2, 2026 enforcement date approaches for regulations such as the EU AI Act, enterprises are shifting from experimental pilots to Safe-by-Design production environments.

Implementing AI Safety in healthcare, fintech, and autonomous systems is no longer a choice but a foundational requirement for market participation.

AI Safety in Healthcare, FinTech & Autonomous Systems

The application of AI safety in industry varies significantly depending on the blast radius of a potential failure. Below is a breakdown of how AI development services are currently being deployed to address AI safety challenges in regulated industries.

1. AI Healthcare: Precision & Clinical Grounding

In clinical settings, enterprise AI safety is measured in patient outcomes. The focus is on preventing automation bias while maintaining high-speed diagnostic support through responsible AI practices.

Use Cases of AI in Healthcare:

AI-driven diagnostics, surgical robots, treatment planning, and nursing documentation, among others.

Risk Areas

Algorithmic Bias: Training AI models on unrepresentative data can widen health disparities among patient populations.

Overreliance & Liability: There is a psychological risk where even seasoned experts begin to defer to the machine. This automation bias creates a massive liability loop when technology-generated errors go unchallenged by human oversight.

Patient Safety & Clinical Risk: AI hallucinations can result in inaccurate diagnostics, false information, or improper recommendations, leading to incorrect treatment.

Data Privacy & Security: The large datasets used for training raise the risk of security breaches that violate regulations like HIPAA and GDPR.

Safety Protocol:

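Retrieval-Augmented Generation (RAG) grounds Generative AI Services in passages retrieved from verified medical journals instead of relying solely on the model's internal memory. This reduces, but does not eliminate, hallucinated answers, and the same grounding approach supports AI safety in industry across other applications.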
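The grounding step can be illustrated with a minimal sketch. It assumes a tiny in-memory corpus and simple keyword overlap in place of a real vector search; the `Passage` type, the `VERIFIED_CORPUS` contents, and the `build_grounded_prompt` helper are all hypothetical names used for illustration, not part of any specific product.

```python
import re
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Passage:
    source: str  # citation label for a vetted document (hypothetical)
    text: str


# Hypothetical stand-in for a curated corpus of verified clinical sources.
VERIFIED_CORPUS = [
    Passage("Source A", "Drug X requires dose adjustment in renal impairment."),
    Passage("Source B", "Drug Y interacts with grapefruit juice."),
]


def tokenize(text: str) -> set:
    """Lowercase the text and split it into alphanumeric word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, corpus: List[Passage], min_overlap: int = 2) -> List[Passage]:
    """Return passages sharing at least `min_overlap` tokens with the query."""
    query_tokens = tokenize(query)
    return [p for p in corpus if len(query_tokens & tokenize(p.text)) >= min_overlap]


def build_grounded_prompt(query: str, corpus: List[Passage]) -> Optional[str]:
    """Build a prompt restricted to retrieved sources, or None if nothing matches."""
    passages = retrieve(query, corpus)
    if not passages:
        # No verified source found: escalate to a clinician instead of guessing.
        return None
    context = "\n".join(f"[{p.source}] {p.text}" for p in passages)
    return (
        "Answer using ONLY the sources below and cite them.\n"
        f"{context}\n\nQuestion: {query}"
    )
```

The design choice worth noting is the `None` return: when retrieval finds nothing, the sketch refuses to build a prompt at all, so the model never gets the chance to answer from memory.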

Our AI-powered skill evaluation platform uses this same validation logic to ensure professional competency assessments are auditable and objective.

2. AI FinTech: Explainability & Fraud Prevention

In finance, AI safety in industry is synonymous with transparency. If a model denies a loan or flags a trade, the "why" must be clear to both the user and the regulator. AI risk management in finance requires opening the "Black Box."

Potential Use Cases:

Autonomous lead scoring, fraud detection, predictive analytics, and AI-powered asset management platforms.

Risk Areas

The "Black Box" Problem: Algorithms that offer no explanation for their decisions can be unpredictable. For AI safety in industry to be effective, models must be transparent so that auditors can verify the logic behind high-stakes loan approvals or rapid trading shifts.

Data Bias & Ethical Risks: AI mirrors its training data. Hence, when trained on skewed historical information, it will automate systemic discrimination, damaging your brand's integrity.

Data Poisoning: This is a sophisticated security threat in which malicious actors corrupt the training data so that a model learns to ignore specific fraud patterns. This creates hidden backdoors, allowing fraud to bypass even the most advanced AI Safety protocols.

Operational & Systemic Risk: Relying too heavily on one model creates a dangerous single point of failure. In our interconnected 2026 markets, one flawed autonomous agent can trigger a flash crash or widespread systemic instability.

Security & Regulatory Compliance: Financial systems are primary targets for adversarial attacks. Failing to secure these models will result in heavy non-compliance penalties under the EU AI Act and GDPR.

GenAI Specific Risks: Generative models used for customer advice can suffer from Hallucinations. Providing confident but factually incorrect financial guidance is a direct path to legal liability.

Safety Protocol:

Explainable AI (XAI) & Adversarial Testing: We use these to open the Black Box, providing a clear rationale for every decision.

Our deep learning fraud detection services utilize this to ensure that security measures are resilient against evolving cyber threats.
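As a rough illustration of what a per-decision rationale can look like, the sketch below scores a loan applicant with a toy logistic model and reports how much each feature moved the score. The weights, feature names, and threshold are hypothetical; for non-linear models, an attribution method such as SHAP would be needed instead of this direct weight-times-value breakdown.

```python
import math

# Hypothetical weights for a toy logistic credit model (illustrative only).
WEIGHTS = {"income_k": 0.04, "debt_ratio": -3.0, "late_payments": -0.8}
BIAS = -1.0
APPROVE_THRESHOLD = 0.5


def explain_decision(applicant: dict) -> dict:
    """Score an applicant and report how much each feature moved the logit."""
    contributions = {name: w * applicant[name] for name, w in WEIGHTS.items()}
    logit = BIAS + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-logit))
    # Adverse factors: features that pushed the score down, most harmful first.
    adverse = sorted(
        (item for item in contributions.items() if item[1] < 0),
        key=lambda item: item[1],
    )
    return {
        "approved": probability >= APPROVE_THRESHOLD,
        "probability": probability,
        "logit": logit,
        "contributions": contributions,
        "adverse_factors": adverse,
    }


decision = explain_decision({"income_k": 50, "debt_ratio": 0.6, "late_payments": 2})
```

Here `decision["adverse_factors"]` lists `debt_ratio` first and `late_payments` second, which is the kind of ranked reason list an auditor or a declined applicant could be shown.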

LCNC Integration:

Integrating low-code no-code application development with Safe Templates further allows non-technical staff to build compliant tools without risking Shadow AI vulnerabilities.

3. AI Autonomous Systems: Real-Time Fail-Safes

In the physical world, AI safety in industry is measured in milliseconds. Reliability must be embedded into the hardware-software loop to prevent catastrophic real-world failure.

Potential Use Cases: Drowsy driving detection, warehouse robotics, and autonomous drone delivery.

Risk Areas

Sensory Confusion: When LiDAR, radar, and cameras disagree, the AI hits a logic wall. This often manifests as erratic movements or "phantom braking," where the system stops for a threat that doesn't exist.

The Latency War: In autonomous systems AI safety, time is the enemy. A half-second processing delay isn't just a slow app. It’s the gap between a safe stop and a high-speed collision.

Hardware Hijacking: If there’s a gap in the AI development services pipeline, an attacker could potentially seize remote control of heavy machinery. In the wrong hands, a corporate asset becomes a physical threat.

The Wild Factor: AI thrives on clean data, but the world is messy. Blinding snow, heavy rain, or low-sun glare can push inputs outside the conditions the model was trained on, so the system begins to misidentify or completely ignore obstacles.

Privacy & IP Theft: These agents are essentially roaming data centers. Without ironclad encryption, the environmental data they collect is a target for privacy leaks or the theft of your proprietary navigation logic.

The Ethics of the Split-Second: We are forcing machines to make trolley problem choices. If an algorithm inadvertently prioritizes certain behaviors over others in an accident scenario, the societal and brand fallout is immeasurable.

The Blame Gap: When an autonomous system fails, the legal path is often a maze. Without a clear Industry-Specific AI Safety Framework, your business is left with an infinite liability problem for every automated action.

Environmental Sabotage: Beyond digital hacking, there is physical hacking. A specifically placed sticker on a stop sign can trick a model's vision, proving that Security & Hijacking risks are often just an adhesive away.

Safety Protocol:

Edge-AI Latency Checks & Fail-Safe Defaults. By processing data at the edge, we avoid cloud round-trip delays. Our SleepBleep project is a prime example, using real-time computer vision to monitor driver fatigue with low-latency response triggers.

Furthermore, our agentic AI workflows include a mandatory Kill Switch, ensuring that if a system detects a sensor anomaly, it automatically enters a safe wait state rather than continuing operation.
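The fail-safe logic described above can be sketched as a single decision function. This is a simplified illustration, not the SleepBleep implementation: the sensor names, the 1.5 m disagreement tolerance, and the 50 ms latency budget are all assumed values chosen for the example.

```python
from enum import Enum


class Mode(Enum):
    OPERATE = "operate"
    SAFE_WAIT = "safe_wait"


# Hypothetical tolerances; real values depend on the vehicle and its sensors.
MAX_SENSOR_SPREAD_M = 1.5  # max allowed disagreement between distance readings
LATENCY_BUDGET_S = 0.05    # max allowed processing time per frame


def decide_mode(distances_m: dict, processing_time_s: float) -> Mode:
    """Enter SAFE_WAIT on any sensor dropout, disagreement, or latency overrun."""
    readings = [d for d in distances_m.values() if d is not None]
    if not readings or len(readings) < len(distances_m):
        return Mode.SAFE_WAIT  # at least one sensor reported nothing
    if max(readings) - min(readings) > MAX_SENSOR_SPREAD_M:
        return Mode.SAFE_WAIT  # sensors disagree about the obstacle distance
    if processing_time_s > LATENCY_BUDGET_S:
        return Mode.SAFE_WAIT  # the result arrived too late to act on safely
    return Mode.OPERATE
```

Every branch that is not an explicit all-clear returns `SAFE_WAIT`, so an unexpected condition defaults to stopping rather than continuing.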

AI Safety in Industry - Comparing Requirements (2026)

| Industry | Primary Safety Goal | Technical Safeguard | Regulatory Focus |
|---|---|---|---|
| Healthcare | Patient Equity & Accuracy | RAG & Clinical Grounding | HIPAA / EU AI Act (High-Risk) |
| FinTech | Algorithmic Fairness | Explainable AI (XAI) | GDPR / NIST AI RMF |
| Autonomous | Physical Safety | Real-time Fail-safes | ISO 26262 / Safety Act |

Core Principles for Safe Industrial AI Deployment

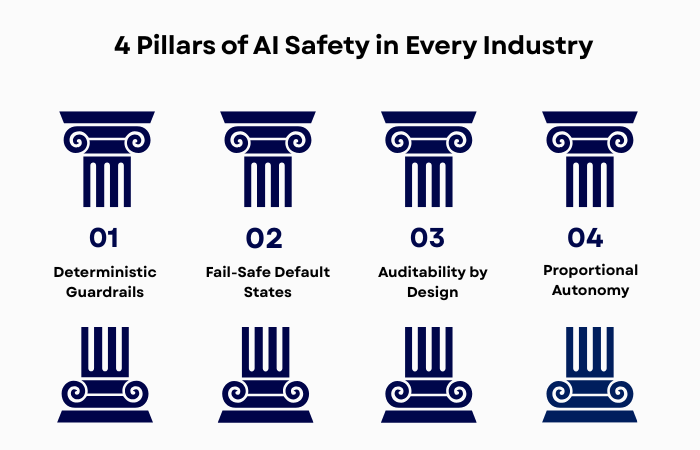

Transitioning from a lab to a factory floor requires an architecture built for AI safety. Managing AI risk in regulated industries means anchoring every deployment to four operational pillars.

The 4 Pillars of AI Safety in Every Industry

All sectors of the economy are now looking for ways to use AI to their advantage. Hence, AI safety in industry rests on the following four pillars:

Deterministic Guardrails: While AI models are probabilistic, their boundaries must be hard-coded. Define no-go zones where the AI is strictly prohibited from acting, regardless of its confidence score.

Fail-Safe Default States: If a sensor fails or a connection drops, the system must trigger a Zero Action state. A robotic arm should freeze and a trading bot should halt, rather than continue executing the last known command.

Auditability by Design: Every decision must be logged in a tamper-proof environment. Using blockchain development services for these logs ensures an immutable trail for root-cause analysis during audits.

Proportional Autonomy: Deploy in stages. Start with Human-in-the-loop (suggestions only) and only move to full autonomy after the AI Risk Management Framework verifies 99.9% reliability.
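The first pillar can be shown with a short sketch of a deterministic guardrail for a robotic arm. The action names, the workspace limits, and the `ProposedAction` type are hypothetical. The point of the example is that the check never reads the model's confidence score.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedAction:
    name: str
    confidence: float  # model confidence, deliberately ignored by the guardrail
    target_x_m: float  # requested arm position along one axis, in meters


# Hypothetical hard limits, set by safety engineers rather than learned.
FORBIDDEN_ACTIONS = {"disable_interlock", "override_estop"}
WORKSPACE_X_M = (0.0, 2.0)


def is_permitted(action: ProposedAction) -> bool:
    """Apply hard-coded no-go rules; confidence never widens the boundary."""
    if action.name in FORBIDDEN_ACTIONS:
        return False
    low, high = WORKSPACE_X_M
    # The chained comparison is False for NaN, so malformed targets are rejected.
    if not (low <= action.target_x_m <= high):
        return False
    return True
```

A call such as `is_permitted(ProposedAction("move_arm", 0.999, 2.5))` returns `False` even at 99.9% confidence, because the target lies outside the permitted workspace.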

Autonomy Levels & Oversight

| Level | AI Role | Human Role | Risk Profile |
|---|---|---|---|
| Augmentation | Suggestions | Execution | Low |
| Supervised | Routine Tasks | Monitoring | Medium |
| Autonomous | End-to-end | Audit | High |
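Staged promotion between these levels can be sketched as a simple gate. The 99.9% figure comes from the Proportional Autonomy pillar; applying it to every promotion step and requiring a minimum of 1,000 observed trials are assumptions made for this example.

```python
LEVELS = ["augmentation", "supervised", "autonomous"]
REQUIRED_RELIABILITY = 0.999  # threshold stated in the Proportional Autonomy pillar
MIN_TRIALS = 1000             # hypothetical minimum sample size before promotion


def next_level(current: str, successes: int, trials: int) -> str:
    """Promote one level only when enough trials show the required reliability."""
    if trials < MIN_TRIALS:
        return current  # not enough evidence yet
    if successes / trials < REQUIRED_RELIABILITY:
        return current  # reliability is below the bar
    index = LEVELS.index(current)
    return LEVELS[min(index + 1, len(LEVELS) - 1)]
```

Promotion moves one level at a time, so a system cannot jump from suggestions straight to end-to-end autonomy on the strength of a single evaluation run.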

Automating AI Safety in the Pipeline

Safety shouldn't stall your low-code no-code application development. Integrate these into your DevOps service:

Automated Red-Teaming: Every build is automatically tested against adversarial prompts.

Safety-Gated CI/CD: If a model's bias or hallucination rate exceeds limits, the deployment pipeline breaks.

Stress Simulations: Models must survive 10,000+ worst-case scenarios in a digital twin before touching real-world data.
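A safety gate of this kind can be a small script that the pipeline runs after evaluation. The sketch below assumes the evaluation step produces a dictionary of metrics; the metric names and limits are hypothetical and would be set by each team's risk policy.

```python
# Hypothetical limits; each team would set these from its own risk policy.
LIMITS = {"hallucination_rate": 0.02, "bias_gap": 0.05}


def check_safety_gate(metrics: dict) -> list:
    """Return a list of human-readable failures; an empty list means pass."""
    failures = []
    for name, limit in LIMITS.items():
        value = metrics.get(name)
        if value is None:
            # A missing metric fails the gate: unmeasured is not the same as safe.
            failures.append(f"{name}: metric missing from evaluation report")
        elif value > limit:
            failures.append(f"{name}: {value:.3f} exceeds limit {limit:.3f}")
    return failures


def gate_exit_code(metrics: dict) -> int:
    """Print any failures and return a process exit code for the CI runner."""
    failures = check_safety_gate(metrics)
    for failure in failures:
        print(f"SAFETY GATE FAILED: {failure}")
    return 1 if failures else 0
```

In a CI job, the final step would call `sys.exit(gate_exit_code(metrics))`, so a non-zero exit code breaks the deployment stage.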

The Role of Low-Code/No-Code in AI Safety

A significant shift in AI safety in high-risk industries such as healthcare, finance, and autonomous systems is the use of LCNC to bridge the Expert Gap.

Traditionally, a doctor or banker had to explain risks to a developer. Now, they build the tools themselves using Safe Blocks.

Automated Redaction: LCNC tools that strip PII (Personally Identifiable Information) before it hits a model.

Embedded Guardrails: Hard-coded ethical rules that prevent an agent from taking unauthorized financial or medical actions.

Audit-Ready Logs: Every click and decision is logged for 72-hour regulatory reporting.

This democratization ensures that AI Safety in Industry Use Cases are led by the people who understand the risks best.
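Two of the blocks listed above, automated redaction and audit-ready logs, can be sketched together. The regular expressions cover only email addresses and US-style Social Security numbers, and the hash chain is a lightweight, assumed stand-in for a tamper-evident store; a production system would need far broader PII coverage and a hardened log backend.

```python
import hashlib
import json
import re

# Deliberately narrow patterns for illustration; real PII detection needs more.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
GENESIS_HASH = "0" * 64


def redact(text: str) -> str:
    """Replace emails and SSN-shaped numbers with placeholders."""
    return SSN_RE.sub("[SSN]", EMAIL_RE.sub("[EMAIL]", text))


def _digest(event: str, prev_hash: str) -> str:
    """Hash an entry together with the previous entry's hash."""
    body = json.dumps({"event": event, "prev_hash": prev_hash}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_entry(log: list, event: str) -> None:
    """Redact the event, then chain it to the previous entry by hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    clean = redact(event)
    log.append({"event": clean, "prev_hash": prev_hash, "hash": _digest(clean, prev_hash)})


def verify_log(log: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(entry["event"], entry["prev_hash"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Because redaction happens inside `append_entry`, raw identifiers never reach the log, and `verify_log` lets an auditor confirm that no entry was altered after the fact.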

Benefit Summary: AI Safety in Industry as a Business Value

| Benefit | Impact on Enterprise |
|---|---|
| Legal Compliance | Zero-friction entry into regulated markets (EU, USA, China). |
| Brand Integrity | Elimination of headline risks associated with biased or rogue AI. |
| Operational Uptime | Fail-safe defaults ensure systems remain stable during sensor/data anomalies. |
| Innovation Velocity | Standardized safety building blocks accelerate the R&D cycle. |

Essential Technical Controls for 2026

To ensure AI Safety in Industry, we implement a three-tier technical defense:

Red Teaming: Proactively breaking the system via prompt injection to find logic gaps.

Model Drift Alerts: Automated monitors that notify our DevOps service teams the moment a model's accuracy begins to decay.

Human-in-the-Loop (HITL): Maintaining a manual Kill Switch for any autonomous action involving high-value assets or human safety.
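The drift alert in this list can be sketched as a rolling-accuracy monitor. It assumes that ground-truth labels eventually become available for each prediction; the window size and the permitted accuracy drop are hypothetical defaults.

```python
from collections import deque


class DriftMonitor:
    """Alert when rolling accuracy falls too far below a fixed baseline."""

    def __init__(self, baseline_accuracy: float, window: int = 100, max_drop: float = 0.05):
        self.baseline_accuracy = baseline_accuracy
        self.max_drop = max_drop
        self.outcomes = deque(maxlen=window)  # 1 for correct, 0 for incorrect

    def record(self, correct: bool) -> bool:
        """Record one labeled outcome; return True if an alert should fire."""
        self.outcomes.append(1 if correct else 0)
        if len(self.outcomes) < self.outcomes.maxlen:
            return False  # wait for a full window before judging
        rolling_accuracy = sum(self.outcomes) / len(self.outcomes)
        return rolling_accuracy < self.baseline_accuracy - self.max_drop
```

In practice the `True` return would be wired to a paging or ticketing system, and a sustained alert would be one trigger for the manual Kill Switch described above.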

Conclusion: Trust as a Market Differentiator

In high-stakes industries, safety is the foundation. AI risk management in regulated industries requires prioritizing transparency and reliability. Whether you are scaling Generative AI Services or deploying agentic AI use cases in 2026, your foundation must be Safe-by-Design. AI safety in healthcare, fintech, and autonomous systems is the benchmark for the late 2020s.

Explore our AI Safety Regulatory Compliance framework to see how MoogleLabs can secure your AI Safety in Industry project.
